Online Trolls Fake Images and Audio in the Internet's Dark Corners

A glimpse of an ominous future in the age of artificial intelligence.

When the Louisiana Parole Board met last October to consider the potential release of a convicted murderer, it called on a doctor with years of experience in mental health to testify about the inmate.

Online Trolls Are Watching You

The parole board was not the only group paying attention. A group of online trolls took screenshots of the doctor from an online feed of her testimony, edited the images with AI tools to make her appear naked, and then shared the altered files on 4chan, a message board known for fostering harassment and spreading hateful content and conspiracy theories.

It was one of several instances in which 4chan users turned to new AI tools, such as voice-altering tools and image generators, to spread racist and offensive content about people who appeared before the parole board, according to Daniel Siegel, a Columbia University student who researches how artificial intelligence is exploited for malicious purposes. Siegel documented the activity on the site over several months.

Artificial Intelligence Tools for Cyber Harassment

Siegel said the altered images and recordings did not spread beyond 4chan. Even so, experts who monitor the site believe these efforts offer a preview of how malicious internet users will use sophisticated AI tools for online harassment and hate campaigns in the months and years ahead.

Fringe sites like 4chan, arguably the worst of them, often provide early signals of how new technologies will be used to spread extreme ideas, said Callum Hood, head of research at the Center for Countering Digital Hate. These platforms, he explained, are full of young people who are quick to adopt new technologies such as AI and to push their beliefs into more mainstream spaces. Those tactics are then often copied by users on more popular platforms.

Fake Images and Countermeasures

Below are several problems caused by AI tools that experts found on 4chan, along with the steps regulators and technology companies are taking in response.

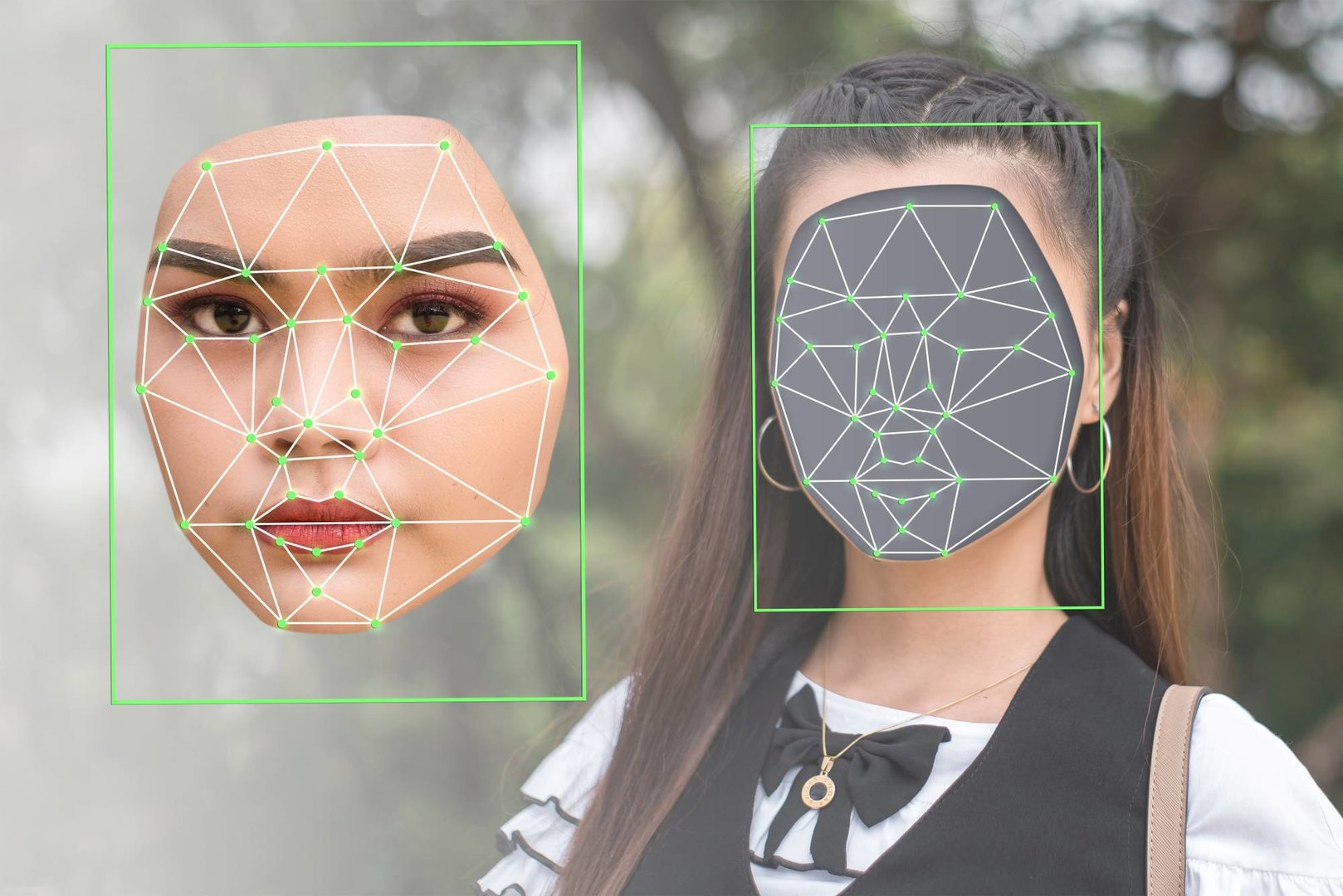

* Synthetic images and AI-generated pornography. AI tools such as DALL-E and Midjourney generate new images from simple text descriptions. But a new wave of image generators, built specifically to create fake pornography, can easily remove clothing from existing images.

Commenting on hate and misinformation campaigns, Hood said, "These campaigns can use AI to fabricate exactly the image you want."

No federal law in the United States bans the creation of fake images of people, which leaves bodies like the Louisiana Parole Board struggling to decide how to respond. The board opened an investigation after Siegel's findings about 4chan came to light.

Frances Abbott, the board's executive director, said, "We will certainly take action against any fabricated images that portray our members or participants in our hearings negatively. However, we must act within the law, and whether these images are illegal is for someone else to determine."

Illinois has expanded its revenge pornography law so that people targeted with nonconsensual pornography made with AI systems can sue those who created or distributed it. California, Virginia, and New York have also passed laws banning the creation or distribution of AI-generated pornography without the consent of the people depicted.

* Voice cloning. Last year, the AI company ElevenLabs released a tool that can produce a convincing copy of someone's voice saying anything the user types into the program.

Shortly after the tool's release, 4chan users posted fake audio of the British actress Emma Watson reading a passage from Adolf Hitler's "Mein Kampf."

They also used footage from Louisiana Parole Board hearings to fabricate clips of judges making offensive and racist remarks about defendants. Many of these clips were made with the ElevenLabs tool, according to Siegel, who used an AI-voice detection tool developed by ElevenLabs itself to trace their origins.

ElevenLabs quickly placed limits on its tool, including requiring users to pay before they could access the voice-cloning features. Experts say, however, that these changes have not slowed the spread of AI-generated voices. Fake celebrity voices have proliferated on platforms such as YouTube and TikTok, most of them spreading misleading political information.

Many platforms, including TikTok and YouTube, have since required labels on some AI content.

Last October, U.S. President Joe Biden signed an executive order calling on all companies to label such content and directing the Department of Commerce to develop standards for watermarking and authenticating AI content.

Customized Artificial Intelligence Tools

As Meta has moved to gain ground in the AI race, the company has adopted a strategy of releasing its code to researchers. This open-source approach aims to speed up development by giving academics and technologists access to more raw material with which to improve existing tools and build their own.

When Meta released its large language model LLaMA to a select group of researchers in February, the model quickly leaked onto 4chan, where people put it to very different uses. They modified it to weaken or remove its safeguards, creating new chatbots capable of generating antisemitic content.

The episode offered a preview of how free and open-source AI tools can be modified by technically skilled users.

Commenting on the incident, a Meta spokesperson said in an email, "While the model is not available to everyone, and some have tried to circumvent the approval process, we believe our current release strategy allows us to balance responsibility and openness."

In the months since, language models have been developed to spread far-right views or generate pornographic content, and 4chan users have continued to modify image generators to produce nude images and racist memes, circumventing the safeguards put in place by major tech companies.