Apple, Google, Amazon spying on you, lawsuits claim

Big Tech is listening to your private discussions, lawsuits claim. Should you be worried?

A federal judge has given a green light for a class-action lawsuit claiming that Apple's Siri voice assistant violates users’ privacy.

Earlier this month, U.S. District Judge Jeffrey White said the plaintiffs would be allowed to move forward with lawsuits trying to prove that Siri routinely recorded their private conversations because of "accidental activations" and that Apple provided the conversations to advertisers, according to Reuters. The plaintiffs allege that Apple violated the federal Wiretap Act and California privacy law, among other claims.

Separate lawsuits against Google and Amazon make similar claims about voice assistants. One of the most common claims cited in the lawsuits is that conversations were recorded without user consent and then used by advertisers to target the plaintiffs.

This is happening against a backdrop of surging smart speaker sales.

As of June 2021, the installed base of smart speakers in the U.S. reached 126 million units, jumping from 20 million units in June 2017, according to Consumer Intelligence Research Partners (CIRP).

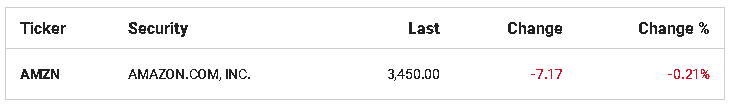

Amazon has the biggest slice of the installed base, with 69% as of June 2021.

"The installed base of smart speakers grew considerably during the COVID-19 pandemic, adding over 25 million units in the past year," said Josh Lowitz, CIRP Partner and Co-Founder in a statement.

Should you be worried? How to protect yourself

Amazon, Apple and Google all offer smart speakers that use variations of voice assistant technology, which is activated when users say key words such as "Hey Siri" for Apple devices, "OK Google" for Google products, or "Alexa" for Amazon smart devices.

Amazon devices store audio when activated with a key word, the so-called wake word. "No audio is stored or sent to the cloud unless the device detects the wake word (or Alexa is activated by pressing a button)," an Amazon spokesperson told FOX Business in an email.

"Customers have several options to manage their recordings, including the option to not have their recordings saved at all and the ability to automatically delete recordings on an ongoing three- or 18-month basis," the spokesperson added.

If you don’t want Alexa to keep your recordings, open the "Privacy" menu in the Alexa app. Go to "Manage your Alexa data," then "Choose how long to save recordings," and select "Don’t save recordings."

Amazon collects and uses voice recordings to deliver and improve services, according to the company. This includes helping train Alexa to better understand different accents and dialects and to provide the right response to requests.

Amazon also said it "manually" reviews data but does not sell it to third parties.

"To help improve Alexa, we manually review and annotate a small fraction of one percent of Alexa requests. Access to human review tools is only granted to employees who require them to improve the service," the Amazon spokesperson said.

"Our annotation process does not associate voice recordings with any customer identifiable information. Customers can opt-out of having their voice recordings included in the fraction of one percent of voice recordings that get reviewed," the spokesperson said.

By default, Google doesn’t retain your audio recordings, José Castañeda, a Google spokesperson, told FOX Business. "We dispute the claims in this case and will vigorously defend ourselves," Castañeda said in a statement.

However, if you want to confirm that the Google setting is off, go to your Google account, then to "Data and Privacy," then "Web & App Activity," and make sure the box next to "Include audio recordings" is unchecked. The default setting is unchecked.

Apple no longer retains Siri recordings without user permission, according to an Apple statement made in 2019. Siri will only retain your data if you choose to opt in via settings on Apple devices.

Amazon would not comment on the lawsuit, and Apple has yet to respond to a request for comment.